In addition, Redshift users could run SQL queries that spanned both data stored in your Redshift cluster and data stored more cost-effectively in S3. With Spectrum, AWS announced that Redshift users would have the ability to run SQL queries against exabytes of unstructured data stored in S3, as though they were Redshift tables. In April 2017, AWS announced a new technology called Redshift Spectrum. That all changed the next month, with a surprise announcement at the AWS San Francisco Summit. However, as of March 2017, AWS did not have an answer to the advancements made by other data warehousing vendors. While the advancements made by Google and Snowflake were certainly enticing to us (and should be to anyone starting out today), we knew we wanted to be as minimally invasive as possible to our existing data engineering infrastructure by staying within our existing AWS ecosystem. The data engineering community has made it clear that these are the capabilities they have come to expect from data warehouse providers. This trend of fully-managed, elastic, and independent data warehouse scaling has gained a ton of popularity in recent years. In addition, both services provide access to inexpensive storage options and allow users to independently scale storage and compute resources.

For both services, the scaling of your data warehousing infrastructure is elastic and fully-managed, eliminating the headache of planning ahead for resources. For example, Google BigQuery and Snowflake provide both automated management of cluster scaling and separation of compute and storage resources. Finding an Elastic ParachuteĪs problems like this have become more prevalent, a number of data warehousing vendors have risen to the challenge to provide solutions. We needed a way to efficiently store this rapidly growing dataset while still being able to analyze it when needed. It simply didn’t make sense to linearly scale our Redshift cluster to accommodate an exponentially growing, but seldom-utilized, dataset. To add insult to injury, a majority of the event data being stored was not even being queried often. We hit an inflection point, however, where the volume of data was growing at such a rate that scaling horizontally by adding machines to our Redshift cluster was no longer technically or financially sustainable. In most cases, the solution to this problem would be trivial simply add machines to our cluster to accommodate the growing volume of data. By the start of 2017, the volume of this data already grew to over 10 billion rows. As our user base has grown, the volume of this data began growing exponentially. We store relevant event-level information such as event name, the user performing the event, the url on which the event took place, etc for just about every event that takes place in the Mode app.

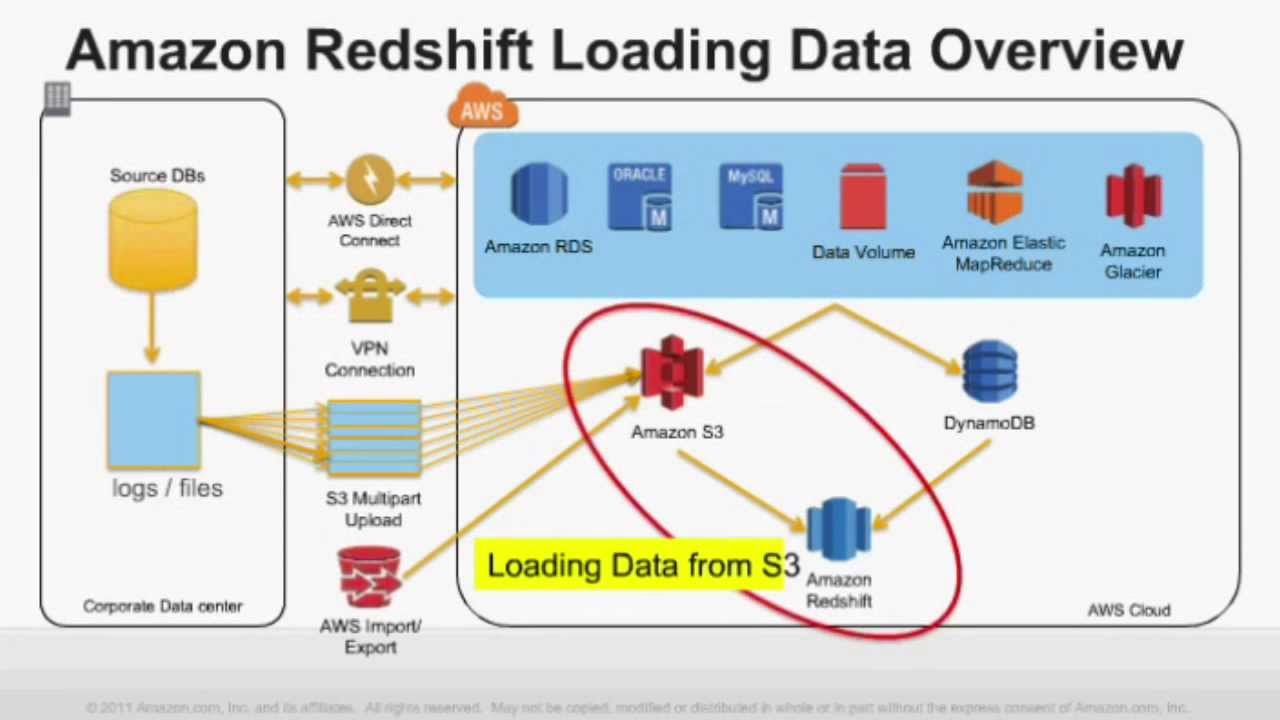

This type of dataset is a common culprit among quickly growing startups. The dataset in question stores all event-level data for our application. Running Off a Horizontal CliffĪfter a brief investigation, we determined that one specific dataset was the root of our problem. Certain data sources being stored in our Redshift cluster were growing at an unsustainable rate, and we were consistently running out of storage resources. Redshift has mostly satisfied the majority of our analytical needs for the past few years, but recently, we began to notice a looming issue. In our early searches for a data warehouse, these factors made choosing Redshift a no-brainer. Redshift enables and optimizes complex analytical SQL queries, all while being linearly scalable and fully-managed within our existing AWS ecosystem. We here at Mode Analytics have been Amazon Redshift users for about 4 years.
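To make the Spectrum idea of querying S3 data "as though they were Redshift tables" a little more concrete, here is a minimal sketch of what such a setup might look like. It only builds the SQL text in Python; it does not connect to a cluster. Every identifier in it (the `spectrum` schema, the `events` and `users` tables, their columns, the bucket path, and the IAM role ARN) is a hypothetical placeholder chosen for illustration, not our actual configuration.

```python
# Sketch only: builds the SQL statements as strings, nothing is executed.
# All schema, table, column, bucket, and role names are hypothetical.

# Register an external schema backed by the AWS data catalog.
CREATE_EXTERNAL_SCHEMA = """
CREATE EXTERNAL SCHEMA spectrum
FROM DATA CATALOG
DATABASE 'events_db'
IAM_ROLE 'arn:aws:iam::123456789012:role/example-spectrum-role'
CREATE EXTERNAL DATABASE IF NOT EXISTS;
""".strip()

# Describe event files sitting in S3 as an external table.
CREATE_EXTERNAL_TABLE = """
CREATE EXTERNAL TABLE spectrum.events (
    event_name  VARCHAR(256),
    user_id     BIGINT,
    url         VARCHAR(2048),
    occurred_at TIMESTAMP
)
STORED AS PARQUET
LOCATION 's3://example-bucket/events/';
""".strip()


def spanning_query(since: str) -> str:
    """Return a query joining S3-backed events to a local Redshift table.

    `since` is interpolated directly for readability; real code should
    pass it as a bound parameter through the database driver instead.
    """
    return (
        "SELECT u.plan, COUNT(*) AS event_count\n"
        "FROM spectrum.events AS e\n"      # external table, data in S3
        "JOIN public.users AS u\n"         # ordinary table in the cluster
        "  ON u.id = e.user_id\n"
        f"WHERE e.occurred_at >= '{since}'\n"
        "GROUP BY u.plan;"
    )


if __name__ == "__main__":
    for statement in (CREATE_EXTERNAL_SCHEMA, CREATE_EXTERNAL_TABLE,
                      spanning_query("2017-01-01")):
        print(statement, end="\n\n")
```

The point of the sketch is the last query: the seldom-queried event rows stay in S3, while a single SQL statement can still join them against tables stored in the cluster.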
